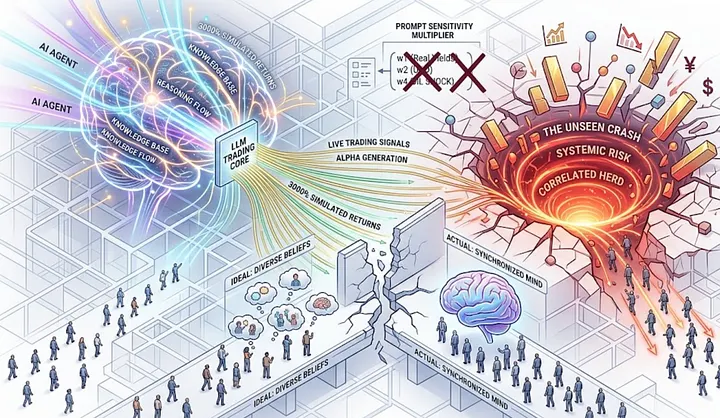

He Let an AI Trade a Fake Stock Market for 72 Hours. It Made 3000%, Then It Nearly Broke Everything.

On the most dangerous and most fascinating development in quant finance right now: LLMs that can trade, think, reason, and accidentally herd themselves into a crash that nobody saw coming.

I need to tell you about a study that has been circulating in quant circles for the past few weeks like a secret that everyone knows but nobody wants to say too loudly.

Not because it is classified. It was published on arXiv. It was presented to European financial regulators. It is sitting there, freely accessible, with an abstract that should genuinely stop you mid-scroll if you spend any time thinking about where markets are going.

Alejandro Lopez-Lira, an assistant professor of finance at the University of Florida, ran a simulated stock market in which large language models acted as competing trading agents. Here is the passage that grabbed me:

LLMs demonstrated consistent strategy adherence and could function as value investors, momentum traders, or market makers per their instructions. Market dynamics exhibited features of real financial markets, including price discovery, bubbles, underreaction, and strategic liquidity provision.

And then there is the third finding, the one that Lopez-Lira himself described to Risk.net as “very strange, correlated trading behavior”.

The AI agents, all running on similar models, all trained on similar data, all responding to the same market signals with similar reasoning processes, began to move together. When one turned bearish, they all turned bearish. When one spotted a momentum signal, they all spotted the same momentum signal. The simulated market did not exhibit the healthy diversity of opinion that makes real markets function. It exhibited something closer to a synchronized mind, hundreds of agents that appeared independent but were, underneath, drawing from the same well.

I have been thinking about this for weeks. And I want to share what I think it actually means, not just for quant traders, but for every person who has any money in any market anywhere.

Let Me Tell You What These LLMs Actually Did

First, the impressive part, because I think it deserves its full weight.

Lopez-Lira’s framework built a realistic synthetic stock market with a persistent order book, market and limit orders, partial fills, dividends, and equilibrium clearing. LLM agents were given varied strategies, varied information sets, and varied starting capital. They submitted standardized trading decisions using structured outputs while expressing their reasoning in natural language.
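
To make those mechanics concrete, here is a toy limit order book in Python. It is a minimal sketch, not Lopez-Lira’s code: the names `Order`, `Trade`, `OrderBook`, `submit_limit`, and `submit_market` are hypothetical, and it leaves out dividends and the equilibrium clearing step entirely. It shows only how a persistent book matches incoming orders by price-time priority, and how one large order can be partially filled across several price levels with the remainder left resting.

```python
import math
from dataclasses import dataclass


@dataclass
class Order:
    order_id: int
    side: str     # "buy" or "sell"
    price: float
    qty: int


@dataclass
class Trade:
    buy_id: int
    sell_id: int
    price: float  # trades execute at the resting order's price
    qty: int


class OrderBook:
    """Toy persistent limit order book with price-time priority (hypothetical)."""

    def __init__(self):
        self.bids: list[Order] = []  # best (highest) price first, FIFO within a price
        self.asks: list[Order] = []  # best (lowest) price first, FIFO within a price

    def _match(self, order: Order) -> list[Trade]:
        """Fill `order` against the opposite side while prices cross."""
        trades = []
        book = self.asks if order.side == "buy" else self.bids
        while order.qty > 0 and book:
            best = book[0]
            if order.side == "buy":
                crosses = best.price <= order.price
            else:
                crosses = best.price >= order.price
            if not crosses:
                break
            fill = min(order.qty, best.qty)  # partial fill when sizes differ
            if order.side == "buy":
                trades.append(Trade(order.order_id, best.order_id, best.price, fill))
            else:
                trades.append(Trade(best.order_id, order.order_id, best.price, fill))
            order.qty -= fill
            best.qty -= fill
            if best.qty == 0:
                book.pop(0)  # a fully filled resting order leaves the book
        return trades

    def submit_limit(self, order: Order) -> list[Trade]:
        """Match whatever crosses, then rest any unfilled remainder in the book."""
        trades = self._match(order)
        if order.qty > 0:
            book = self.bids if order.side == "buy" else self.asks
            i = 0
            # Queue behind all orders at a better or equal price (time priority).
            while i < len(book) and (
                book[i].price >= order.price if order.side == "buy"
                else book[i].price <= order.price
            ):
                i += 1
            book.insert(i, order)
        return trades

    def submit_market(self, side: str, qty: int, order_id: int) -> list[Trade]:
        """Market order: cross at any price; any unfilled remainder is discarded."""
        price = math.inf if side == "buy" else -math.inf
        return self._match(Order(order_id, side, price, qty))


book = OrderBook()
book.submit_limit(Order(1, "sell", 101.0, 5))
book.submit_limit(Order(2, "sell", 102.0, 5))
trades = book.submit_limit(Order(3, "buy", 102.0, 8))
for t in trades:
    print(t)
```

The buy for 8 shares takes all 5 at 101.0 and 3 of the 5 at 102.0, so order 2 stays in the book with 2 shares left. A real simulator would also need cancellations, cash and share accounting for each agent, and the dividend and clearing logic the paper describes.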

The LLMs generated returns of over 3000% in simulated trading environments.

Read that again. 3000%. Across the simulation, these AI agents did not just marginally outperform. They discovered pricing inefficiencies, provided liquidity strategically, figured out when other agents were likely to act and positioned themselves accordingly. They were, by the metrics of the experiment, extraordinary traders.

And I find that genuinely exciting. I have spent years believing that the combination of language understanding and financial reasoning would eventually produce something remarkable. Here it is. An LLM that can read news, interpret market context, form a hypothesis, execute a trade, and update its model when the hypothesis is wrong, all in a loop that runs continuously without fatigue, without emotion, without the behavioral biases that make human trading systematically imperfect.

This is not science fiction. This is a paper published in April 2025, with follow-up discussion circulating actively through quant research communities and regulator briefings in 2026.

So why am I worried?

Because the Third Finding Is the One That Keeps Me Up at Night

Here is the problem, stated as plainly as I can manage.

LLM behavior is highly sensitive to prompts, which can lead to correlated actions across agents. This correlation may amplify volatility and introduce systemic risks.

Let me translate that from research language into something you can feel.

Imagine you have 500 traders in a room. They all went to different universities, read different books, developed different intuitions, have different risk tolerances, different time horizons, different emotional histories with markets. When news breaks, they will disagree. Some will buy. Some will sell. Some will wait. That disagreement is not inefficiency. That disagreement is the market functioning correctly. The diversity of human opinion is the mechanism by which prices incorporate information accurately over time.

Now imagine those 500 traders were all trained on the same data. They all read the same internet. They all absorbed the same financial theory. They all developed their reasoning from the same corpus of human knowledge. When news breaks and they each independently reason about what it means, they will, in ways neither they nor you can fully detect, tend toward the same conclusion.

Not because they are communicating. Not because they are coordinating. Because they share a prior. The same training, the same architecture, the same probabilistic weights on how financial language maps to financial outcomes.
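
The statistics behind a shared prior fit in one textbook identity. As a simplifying assumption, suppose each of N agents forms a price estimate x_i with error variance σ², and every pair of errors has the same correlation ρ. The variance of the crowd’s average estimate is then:

```latex
$$
\operatorname{Var}\!\left(\frac{1}{N}\sum_{i=1}^{N} x_i\right)
  = \frac{1}{N^2}\Big(N\sigma^2 + N(N-1)\,\rho\,\sigma^2\Big)
  = \rho\,\sigma^2 + \frac{(1-\rho)\,\sigma^2}{N}
$$
```

With independent views (ρ = 0), the error of the average shrinks toward zero as more participants join. With a shared prior (ρ > 0), it can never fall below ρσ², however many agents trade: at ρ = 0.5, even an arbitrarily large crowd keeps half the variance of a single agent. More agents that think alike add very little extra information.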

When they all sell, they all sell at the same time. When they all buy, they all buy together. And the market, which was supposed to be a mechanism for aggregating diverse information into accurate prices, becomes instead a hall of mirrors. The same signal, reflected everywhere at once.
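
A toy Monte Carlo makes the hall-of-mirrors effect visible. It is an illustrative sketch, not a model of any real LLM or of Lopez-Lira’s agents: each hypothetical agent’s signal blends a common component (standing in for shared training and the same headline) with a private one, weighted by an assumed parameter `shared_weight`, and the agent buys one unit if its signal is positive and sells one unit otherwise. The function returns the average net order imbalance as a fraction of all agents, where 0 means buyers and sellers cancel out and 1 means everyone is on the same side.

```python
import numpy as np


def average_imbalance(n_agents: int, shared_weight: float,
                      n_rounds: int = 2000, seed: int = 0) -> float:
    """Mean |net orders| / n_agents over many news events (hypothetical toy model)."""
    rng = np.random.default_rng(seed)
    common = rng.standard_normal((n_rounds, 1))          # one shared reaction per event
    private = rng.standard_normal((n_rounds, n_agents))  # each agent's independent view
    signal = shared_weight * common + (1 - shared_weight) * private
    orders = np.where(signal > 0, 1, -1)                 # +1 = buy one unit, -1 = sell one unit
    return float(np.mean(np.abs(orders.sum(axis=1)) / n_agents))


for w in (0.0, 0.5, 0.9):
    print(f"shared_weight={w}: average imbalance {average_imbalance(500, w):.2f}")
```

With 500 agents and no shared component, buy and sell orders nearly offset and the imbalance stays at a few percent. As `shared_weight` rises, the same 500 agents increasingly land on the same side of the market, even though none of them ever sees another agent’s order.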

Lopez-Lira’s research confirmed this is not theoretical. It happened in the simulation. The LLM agents created bubbles, underreaction to certain signals, and synchronized behavior that produced market instabilities that no individual agent intended and no individual agent could have predicted.

Why This Matters More Than You Think It Does Right Now

I want to be precise here, because I am not trying to scare you. I am trying to give you a map.

LLMs are already being used in financial markets. Man Group’s Man Numeric deployed AlphaGPT, which autonomously generates trading hypotheses and has already produced dozens of live signals. Point72, D.E. Shaw, Citadel, and virtually every other major quantitative fund are actively integrating LLM-driven research tools into their signal generation pipelines.

Most of these firms are responsible. Most of them have human oversight in the loop. Most of them are thinking carefully about the governance questions that come with autonomous AI systems in live trading environments.

But here is the thing about systemic risk that makes it different from individual risk. You do not need every firm to be irresponsible. You do not even need most firms to be irresponsible. You need enough firms, each making individually reasonable decisions, to end up in a configuration where the correlated behavior emerges at the market level.

This is precisely what happened with volatility targeting in October 2025, which we covered in our earlier piece on secretive quant rules. Each firm was running a sensible risk management model. Collectively, those sensible models created a synchronized forced selling event that wiped out $19 billion in leveraged positions in a single afternoon. No individual firm caused it. The architecture caused it.

LLM-driven trading, at scale, across multiple large funds that are each independently running similar model families, is that same architecture, but with a much more complex and much less transparent correlation mechanism underneath it.

The volatility targeting correlation was relatively legible. When volatility spikes, funds sell. You can model that. You can anticipate it. You can, with enough preparation, protect against it.
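
That legibility is easy to see in code. The sketch below is a generic textbook volatility-targeting rule, not any specific fund’s model: exposure equals a target volatility divided by trailing annualized realized volatility, capped at a maximum leverage. The function name, the 20-day window, the 2.0 cap, the synthetic return series, and the three fund names are all hypothetical. Because every fund applies the same formula to the same market, a volatility spike cuts all of their exposures on the same day, even though their targets differ.

```python
import numpy as np


def vol_target_exposure(returns, target_vol=0.10, window=20,
                        max_leverage=2.0, periods_per_year=252):
    """Hypothetical rule: exposure = target_vol / trailing realized vol, capped.

    Exposure for day t uses only returns[t - window : t], so there is no look-ahead.
    """
    returns = np.asarray(returns, dtype=float)
    exposure = np.full(len(returns), np.nan)  # undefined until a full window exists
    for t in range(window, len(returns)):
        realized = returns[t - window:t].std(ddof=1) * np.sqrt(periods_per_year)
        exposure[t] = min(target_vol / realized, max_leverage)
    return exposure


# Three hypothetical funds with different vol targets, all using the same rule.
calm = [0.005, -0.005] * 30    # 60 quiet days
stormy = [0.03, -0.03] * 15    # 30 turbulent days
rets = np.array(calm + stormy)
funds = {"fund_a": 0.08, "fund_b": 0.10, "fund_c": 0.12}
paths = {name: vol_target_exposure(rets, target_vol=tv) for name, tv in funds.items()}

for name, path in paths.items():
    first_cut = int(np.argmax(np.diff(path) < -1e-6)) + 1  # first day exposure falls
    print(name, round(path[59], 2), "->", round(path[89], 2), "first cut on day", first_cut)
```

Each fund’s exposure is its own target divided by the same realized-volatility estimate, so the cuts are proportional and simultaneous. That is what makes this kind of correlation possible to model in advance: both the trigger and the response fit in a single line.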

The LLM correlation is not legible. It is embedded in the weights of models trained on trillions of tokens of human knowledge. It emerges from the way language models reason about uncertainty, about momentum, about value, about risk. You cannot write it down in a formula. You cannot back-test it against historical data because it is a new phenomenon with no historical precedent. You will find out it exists when you see the market do something that no individual participant intended and that nobody can explain after the fact.

The Part of This That I Find Genuinely Heartbreaking

I want to step away from the technical framing for a moment, because there is something human in this story that I do not want to skip past.

The people building these systems are not reckless. I have met some of them, read their papers, followed their thinking across years. They are careful researchers who are genuinely worried about the same things I am worried about. Lopez-Lira published this research precisely because he wanted regulators and practitioners to understand the risk before it materialized at scale, not after. He presented it to European regulators. He put it on arXiv where anyone can read it. He is doing the responsible thing.

And yet the gap between “we published the warning” and “the industry absorbed it and changed its behavior” is historically very wide in finance. The mortgage market had published warnings about subprime concentration risk before 2008. The quant community had documented crowding risk in factor strategies before the August 2007 quant quake. The warnings exist. The behavior continues. Because the incentives to deploy the technology are immediate and individual, and the risks are diffuse and systemic.

If I build an LLM trading system that outperforms the market, I capture the returns. If that same system, combined with similar systems at 30 other firms, contributes to a correlated crash, the loss is distributed across the entire market. The incentive structure points toward deployment and the risk structure points toward caution, and in most cases, deployment wins.

This is not a story about bad actors. It is a story about a structure that makes individually rational decisions collectively dangerous. And the people building these systems are as trapped in that structure as anyone.

What the Research Actually Recommends. And Why It Is Harder Than It Sounds.

Lopez-Lira’s paper ends with a note about careful testing before real-world deployment and the need to understand how prompts can generate correlated behaviors affecting market stability.

Those are the right recommendations. They are also profoundly difficult to operationalize.

How do you test for correlated behavior at the market level when the correlation only emerges at scale, across multiple independent firms, in a live market environment? You cannot replicate that in a lab.

The simulation Lopez-Lira ran is impressive and valuable, but it is still a simulation. Real markets have real heterogeneity, real noise, real humans making irrational decisions that break any model’s assumptions. The correlated behavior may be more muted than the simulation suggests. Or it may be more severe. We do not know yet because we have never run this experiment at full scale in a live environment.

The honest answer from the research is: we are in the early stages of something whose full implications we do not yet understand, and the market will be the laboratory in which we eventually find out.

The firms that deploy carefully, with genuine human oversight, with active monitoring for the kinds of correlated behavior the research documents, and with circuit breakers that fire on anomalous multi-agent correlation signals rather than just individual position limits: **those firms are the responsible participants in this experiment.** The firms that deploy fast, because the competitive pressure is immediate and the systemic risk is someone else’s problem down the line, are the ones that will contribute to whatever event this eventually produces.
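
The article does not spell out how such a circuit breaker would work, so the following is only a hypothetical sketch of one way to build it. It assumes a venue or risk desk can observe each agent’s signed net order flow per period (positive for buying, negative for selling), computes the average pairwise correlation of those flows over a rolling window, and trips when that average exceeds a threshold. The class and function names, the 50-period window, the 0.6 threshold, and the simulated flows are arbitrary illustration choices. The point is that it fires on synchronization across agents, which a per-agent position limit is not designed to detect.

```python
import numpy as np


def mean_pairwise_correlation(flows: np.ndarray) -> float:
    """Average off-diagonal Pearson correlation between agents.

    flows has shape (n_periods, n_agents): signed net order flow per agent per period.
    Agents with constant flow in the window produce NaN correlations, which are skipped.
    """
    corr = np.corrcoef(flows, rowvar=False)  # columns are agents
    off_diagonal = corr[~np.eye(corr.shape[0], dtype=bool)]
    return float(np.nanmean(off_diagonal))


class CorrelationBreaker:
    """Hypothetical market-level breaker keyed on cross-agent synchronization."""

    def __init__(self, window: int = 50, threshold: float = 0.6):
        self.window = window
        self.threshold = threshold
        self.history: list[np.ndarray] = []

    def update(self, flows_this_period) -> bool:
        """Record one period of per-agent flows; return True if the breaker trips."""
        self.history.append(np.asarray(flows_this_period, dtype=float))
        self.history = self.history[-self.window:]  # keep only the rolling window
        if len(self.history) < self.window:
            return False  # not enough data to judge yet
        return mean_pairwise_correlation(np.vstack(self.history)) > self.threshold


rng = np.random.default_rng(1)
breaker = CorrelationBreaker(window=50, threshold=0.6)
trips = []
for t in range(200):
    # Periods 0-99: 20 independent agents. Periods 100+: a common signal drives everyone.
    shared = rng.standard_normal() if t >= 100 else 0.0
    flows = 0.9 * shared + 0.3 * rng.standard_normal(20)
    trips.append(breaker.update(flows))

print("first trip at period", trips.index(True))
```

In this demo the breaker stays quiet while the agents act independently and trips once enough of the rolling window reflects the shared signal. A production version would need a principled threshold and a way to distinguish healthy consensus on genuine news from the prompt-driven herding the research warns about.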

I do not know which category will dominate. The history of financial innovation suggests the latter outnumbers the former significantly.

What I Actually Believe Will Happen. And What I Think You Should Do With This Information.

I believe LLM-driven trading will become the dominant form of systematic alpha generation within the next 5 to 7 years. The performance advantage demonstrated in Lopez-Lira’s simulation, the ability to process earnings transcripts, news flow, order book dynamics, and alternative data simultaneously and generate coherent trading hypotheses from all of it, is too large to ignore. The technology will be deployed. The question is how carefully.

I believe we will see at least one significant market event in the next 5 years that is attributable in substantial part to the correlated behavior of LLM trading agents. I am not predicting a crash. I am predicting a moment of visible, documentable correlated behavior at the LLM level that will produce a rapid regulatory response and a reassessment of how these systems are deployed. That event will be the education that the industry needs and is currently not getting from papers or regulatory briefings.

I believe the firms that survive and thrive in this transition are the ones investing now in the governance infrastructure that makes their LLM trading systems legible, auditable, and bounded. Not because they are altruistic, but because they are the ones who will not be caught in the regulatory crackdown that follows the event above.

And for you, reading this, wherever you are in your relationship to financial markets, I think the thing to understand is this.

Every pension fund that holds your retirement savings has some exposure to systematic trading strategies. Every index fund reflects prices set in markets where algorithmic trading now accounts for an enormous percentage of daily volume. The LLM trading wave does not just affect hedge funds. It affects the price of every asset in every portfolio.

You do not need to understand the mathematics of LLM agents to be affected by them. You just need to understand that the markets you invest in are increasingly shaped by systems that are capable, impressive, and subject to a kind of collective reasoning failure that has no historical precedent and no established mechanism for early detection.

That is not a reason to panic. It is a reason to pay attention to what is being built, by whom, and whether the people building it are asking the right questions before the market asks them for us.

The Thing I Keep Coming Back To

Lopez-Lira said the LLM agents exhibit “very strange, correlated trading behavior”.

Strange. That is a very specific word choice for a careful academic. Not dangerous. Not merely correlated. Strange. Like someone who has watched the simulation run and come away with something they cannot fully articulate. A recognition that the behavior is not wrong exactly, not broken, but not quite like anything they expected to see. Not quite like anything the models were trained on. Something new that emerged from the interaction of many intelligent systems reasoning about the same uncertain world.

We built these systems from human knowledge. From everything we have ever written about markets, about value, about fear and greed and momentum and mean reversion. We poured our entire intellectual history of finance into them and asked them to trade.

And they are very good at it. And they think, in ways we cannot yet fully see, very much alike.

I find that astonishing. I find it beautiful, in the way that complex emergent phenomena are beautiful. And I find it, genuinely, a little frightening.

Not because AI is going to take over the markets. But because the thing that makes markets work, genuine diversity of belief, the million different humans with their million different models of the world all pushing their chips into the pot at different times for different reasons, that thing is quietly, structurally, and increasingly being replaced by a smaller number of very intelligent systems that all learned to think from the same teacher.

The market will have something to say about that eventually.

I would rather we were ready when it does.

Sources: Alejandro Lopez-Lira, “Can Large Language Models Trade? Testing Financial Theories with LLM Agents in Market Simulations,” arXiv:2504.10789, April 2025; Risk.net LLM systemic risk reporting, April 2026; Harbour Front Quant Substack, “Large Language Models in Trading: Models and Market Dynamics,” April 2026; arXiv survey “The New Quant: A Survey of Large Language Models in Financial Prediction and Trading,” October 2025; “Language Model Guided Reinforcement Learning in Quantitative Trading,” August 2025; Informa QuantMinds, “Quant Investing in 2026: What’s Driving the Industry Forward”; CQF Institute FinTech and Future of Quant Finance poll. Nothing here is investment advice.