NIST’s critical infrastructure AI profile and the vendor governance shift

On April 7, 2026, NIST released a concept note for a new AI Risk Management Framework (AI RMF) profile: Trustworthy AI in Critical Infrastructure. It targets operators in energy, water, transportation, and other critical infrastructure (CI) sectors that run AI in environments where failure has physical consequences.

The document itself is a concept note, not a finished standard. But the direction is clear and worth paying attention to now: NIST is building a structured framework for CI operators to define, communicate, and enforce AI trustworthiness requirements upstream to their vendors and developers.

If you work in enterprise architecture (even outside critical infrastructure), the pattern NIST is formalizing here will affect how you evaluate and govern AI vendors within 12–18 months. Probably sooner.

The pattern: governance requirements flow upstream

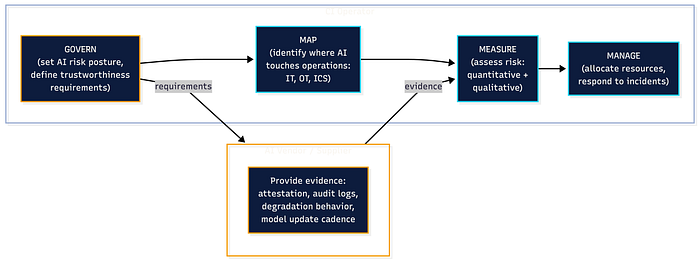

The AI RMF has 4 core functions: Govern, Map, Measure, Manage. They've existed since NIST published AI RMF 1.0 in January 2023. The GenAI profile (NIST AI 600-1, July 2024) layered generative AI risks on top of those same 4 functions.

This new CI profile does something different. It takes the 4 functions and reorients them around a specific relationship: the operator who deploys AI and the vendor who supplies it. The profile gives operators a structured vocabulary for telling vendors exactly what “trustworthy” means in their environment: what risks they need addressed and what evidence they expect back.

That’s the shift. Trustworthiness becomes a contractual, auditable requirement flowing from operator to vendor.
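To make "contractual, auditable requirement" concrete, here is a minimal Python sketch of how an operator might record requirements before sending them upstream. The schema (`VendorRequirement`, `RmfFunction`, `expected_evidence`) is hypothetical and not defined by the concept note; it only illustrates the idea that each requirement names an AI RMF function, the risk to address, and the evidence the vendor owes back.

```python
from dataclasses import dataclass, field
from enum import Enum


class RmfFunction(Enum):
    """The four AI RMF core functions."""
    GOVERN = "Govern"
    MAP = "Map"
    MEASURE = "Measure"
    MANAGE = "Manage"


@dataclass
class VendorRequirement:
    """One operator-defined trustworthiness requirement sent to a vendor.

    Hypothetical schema for illustration only; NIST does not define it.
    """
    req_id: str
    function: RmfFunction
    risk: str  # the operational risk the vendor must address
    expected_evidence: list[str] = field(default_factory=list)  # what comes back

    def is_auditable(self) -> bool:
        # Without expected evidence there is nothing to audit against.
        return len(self.expected_evidence) > 0


requirements = [
    VendorRequirement(
        "REQ-001", RmfFunction.MEASURE,
        "Model drift in sensor-anomaly detection",
        ["Monthly drift report against operator-supplied baseline"],
    ),
    VendorRequirement(
        "REQ-002", RmfFunction.MANAGE,
        "Silent failure during communications loss",
    ),
]

for r in requirements:
    status = "auditable" if r.is_auditable() else "MISSING EVIDENCE"
    print(f"{r.req_id} [{r.function.value}] {r.risk}: {status}")
```

Here the second requirement is flagged as missing evidence: it states a risk but never says what the vendor must hand back, so it cannot be audited.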

Why this matters beyond critical infrastructure

You might look at this and think: I don’t run power grids or water treatment plants. True. But NIST profiles have a pattern of their own. They start sector-specific, then become the template everyone else copies.

The GenAI profile followed the same path. Released for a specific risk category (generative AI), it quickly became the reference point for any organization trying to structure AI risk management. Procurement teams started referencing it. Audit teams built checklists from it. Compliance frameworks absorbed it.

The CI profile will do the same thing for vendor governance. The 4-function structure (Govern your AI risk posture, Map where AI touches your operations, Measure whether the system behaves as expected, Manage when it doesn’t) works for a bank evaluating an AI-powered fraud detection vendor. It works for a hospital evaluating a clinical decision support system. It works for any organization where AI enters the environment through a third party.

For junior engineers: pay attention to how the AI RMF structures risk. Govern, Map, Measure, Manage is becoming the lingua franca for AI risk conversations in regulated industries. Knowing it gives you a shared vocabulary with compliance and legal teams that most engineers don't have.

For senior engineers and architects: the CI profile is a forcing function. If you’re evaluating AI vendors today, you’re probably doing it with a spreadsheet and some pointed questions during a sales call. The profile gives you a structured framework to push requirements upstream. What risks does this system introduce to our operational environment? What evidence can the vendor provide that those risks are managed? What happens when the system degrades? You’ve been asking these questions already. The profile gives you a NIST-backed structure to organize the answers.

3 things the CI profile signals about where vendor governance is headed

- AI trustworthiness becomes a supply chain requirement — The concept note explicitly scopes requirements across “AI and CI lifecycles and supply chains.” That’s NIST saying: you can’t govern AI risk if you stop at your own org boundary. Your vendor’s vendor matters. Their training data pipeline matters. Their model update cadence matters. Expect procurement and vendor management teams to start asking for this.

- OT and IT governance converge — The profile addresses AI in OT, ICS, and cyber-physical systems alongside traditional IT environments. Most enterprises still govern these separately, with different teams and risk frameworks. AI is the forcing function that collapses that separation, because a single AI system can touch both the control logic and the business logic. Architecture teams that still draw hard lines between OT and IT governance will need to redraw them.

- Graceful degradation becomes a first-class requirement — The concept note calls out AI systems that “degrade gracefully and transparently in response to adverse conditions while alerting human supervisors.” That’s a specific design pattern being codified into a governance expectation. If your vendor’s AI system fails silently or fails catastrophically with no fallback, that’s now a governance gap you can point to with a NIST reference behind it.
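What "degrade gracefully and transparently in response to adverse conditions while alerting human supervisors" can look like in code is sketched below. This is one possible pattern, not a design the concept note prescribes: the wrapper name, the 0.8 confidence threshold, and the assumption that the model returns a (prediction, confidence) pair are all hypothetical. The model's answer is used only when the call succeeds with enough confidence; otherwise the wrapper falls back to a conservative non-AI default, marks the result as degraded, and alerts a human.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    value: float
    source: str  # "model" or "fallback", so consumers can see which path ran
    degraded: bool
    reason: str = ""


def with_graceful_degradation(
    model: Callable[[dict], tuple[float, float]],  # returns (prediction, confidence)
    fallback: Callable[[dict], float],  # conservative, non-AI default
    alert: Callable[[str], None],  # notifies a human supervisor
    min_confidence: float = 0.8,  # hypothetical threshold
) -> Callable[[dict], Decision]:
    """Hypothetical wrapper: degrade to a fallback and alert instead of failing silently."""

    def decide(inputs: dict) -> Decision:
        try:
            value, confidence = model(inputs)
        except Exception as exc:  # any model failure takes the fallback path
            reason = f"model error: {exc}"
        else:
            if confidence >= min_confidence:
                return Decision(value, "model", degraded=False)
            reason = f"low confidence: {confidence:.2f} < {min_confidence:.2f}"
        alert(reason)  # every degradation is surfaced to a human
        return Decision(fallback(inputs), "fallback", degraded=True, reason=reason)

    return decide


# Usage with a toy model that fails when its (hypothetical) sensor feed is lost.
alerts: list[str] = []


def flaky_model(inputs: dict) -> tuple[float, float]:
    if inputs.get("sensor_ok") is False:
        raise ValueError("sensor feed missing")
    return inputs["reading"] * 1.1, inputs.get("confidence", 0.95)


decide = with_graceful_degradation(
    flaky_model,
    fallback=lambda inputs: 0.0,  # e.g. hold a known-safe setpoint
    alert=alerts.append,
)

normal = decide({"reading": 10.0})
lost = decide({"reading": 10.0, "sensor_ok": False})
```

The `source` and `degraded` fields carry the "transparent" half of the requirement: downstream systems and auditors can tell when a decision came from the fallback rather than the model, and the alert list records why.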

What to do with this on Monday

If you’re an enterprise architect or engineering leader evaluating AI vendors, 3 concrete actions:

- Pull the NIST concept note (it's 10 pages, published April 7, 2026) and read the sections on governance and supply chain. Even if you're not in a CI sector, the vendor governance structure is directly applicable. Link: nist.gov/programs-projects/concept-note-ai-rmf-profile-trustworthy-ai-critical-infrastructure.

- Map your current vendor evaluation process against the 4 AI RMF functions. Where do you have coverage? Where are you relying on vendor self-attestation with no independent verification? That gap is where the profile’s structure adds the most value.

- Ask your AI vendors one question: “What evidence can you provide that your system degrades gracefully under adverse conditions?” The answer (or the silence) will tell you a lot about their maturity.
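The mapping exercise in the second action can start as a small script. The sketch below assumes you can list your current evaluation checks and tag each with the AI RMF function it supports and the kind of evidence behind it; the check names, the three evidence levels, and `coverage_gaps` are hypothetical illustrations, not part of the NIST material.

```python
from collections import defaultdict
from enum import Enum


class Evidence(Enum):
    NONE = 0
    SELF_ATTESTED = 1  # the vendor says so; nothing was checked
    INDEPENDENTLY_VERIFIED = 2  # third-party audit, tests you reproduced, etc.


RMF_FUNCTIONS = ("Govern", "Map", "Measure", "Manage")

# Hypothetical inventory of today's vendor-evaluation checks, each tagged
# with the AI RMF function it most closely supports.
current_checks = [
    ("Vendor AI policy document reviewed", "Govern", Evidence.SELF_ATTESTED),
    ("Architecture diagram of AI touchpoints", "Map", Evidence.INDEPENDENTLY_VERIFIED),
    ("Accuracy claims from sales deck", "Measure", Evidence.SELF_ATTESTED),
]


def coverage_gaps(checks):
    """Label each RMF function by the strongest evidence any check provides."""
    best = defaultdict(lambda: Evidence.NONE)
    for _name, function, evidence in checks:
        if evidence.value > best[function].value:
            best[function] = evidence
    labels = {
        Evidence.NONE: "no coverage",
        Evidence.SELF_ATTESTED: "self-attestation only",
        Evidence.INDEPENDENTLY_VERIFIED: "verified",
    }
    return {function: labels[best[function]] for function in RMF_FUNCTIONS}


for function, status in coverage_gaps(current_checks).items():
    print(f"{function:8} {status}")
```

With this sample inventory, Manage comes back with no coverage, while Govern and Measure rest on self-attestation alone. Those two categories are the gaps where the profile's structure adds the most value.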

The pattern

NIST profiles start as sector-specific guidance. They become cross-industry expectations. The CI AI profile formalizes what enterprise architects have been doing informally: pushing trustworthiness requirements upstream to vendors with specific, auditable criteria.

The concept note is a starting gun. The final profile will take months to develop through NIST's Community of Interest process. But the direction is set. If you're building your AI vendor governance process from scratch right now, building it on the AI RMF's 4-function structure is the safest architectural bet.

The teams that treat this as “critical infrastructure only” will be retooling when their own regulators, auditors, or customers start asking the same questions. The teams that borrow the structure now will already have answers.